Mind and Brain

Over the past century or so, we've learned a lot about the mental processes of producing, perceiving and learning language. This knowledge is detailed and extensive, but in most cases, we do not know how these processes are actually implemented in the brain. Over the same period, we've learned a great deal about the localization of different linguistic abilities in different regions of the brain, and also about how neural computation works in general. However, our understanding of how the brain creates and understands language remains relatively crude. One of today's great scientific challenges is to integrate the results of these two different kinds of investigation -- of the mind and of the brain -- with the goal of bringing both to a deeper level of understanding.

As a concrete example of this mind/brain dichotomy, consider the following. From literally thousands of studies, we know that word frequency has a large effect on mental processing of both speech and text: in all sorts of tasks commoner words are processed more quickly than rarer ones, other things being equal. However, we don't know for sure how this is implemented in the brain. Is "neural knowledge" of more common words stored in larger or more widespread chunks of brain tissue? Are the neural representations of common words more widely or strongly connected? Are the resting activation levels of their neural representations simply higher? Are they less efficiently inhibited? Amazingly enough, there is no clear evidence about the relative contributions of these four different kinds of brain mechanisms to the phenomenon of word frequency effects.
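To make one of these four hypotheses concrete, here is a toy sketch of the "higher resting activation" idea. It is purely illustrative: the function name, the integer activation units, the fixed per-step increment and the threshold are all assumptions made for this example, not a model proposed in these notes and not a claim about how real neurons behave.

```python
def steps_to_threshold(resting_level, rate=10, threshold=100):
    """Count the time steps a unit needs to climb from rest to threshold.

    Hypothetical toy model: activation is measured in arbitrary integer
    units and grows by a fixed `rate` on every step of input.
    """
    if rate <= 0:
        raise ValueError("rate must be positive")
    activation = resting_level
    steps = 0
    while activation < threshold:
        activation += rate
        steps += 1
    return steps


# Assumed resting levels: the common word starts closer to threshold.
common_word_steps = steps_to_threshold(resting_level=50)
rare_word_steps = steps_to_threshold(resting_level=20)
print(common_word_steps, rare_word_steps)
```

In this sketch the common word reaches threshold in fewer steps only because it starts closer to it. The other three hypotheses (more tissue, stronger connections, weaker inhibition) could produce the same behavioral advantage, which is exactly why behavioral data alone cannot decide among them.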

Again, psychological research tells us that there is also a strong recency effect: in all sorts of tasks, words that we've heard or seen recently are processed more quickly. Again, we don't know how the recency effect arises in the brain, nor do we know whether the brain mechanisms underlying the frequency and recency effects are partly or entirely the same. There is no lack of speculation on these questions, but we honestly just don't know at this point.

This simple example is typical. Very little of what we know about mental processing of speech and language can be translated with confidence into talk about the brain. At the same time, very little of what we know about the neurology of language can now be expressed coherently in terms of what we know about mental processing of language. For example, one of the most striking facts about the neurology of speech and language is lateralization: the fact that one of the two cerebral hemispheres, usually the left one, plays a dominant role in many aspects of language-related brain function. However, we learn about this only by probing brain function directly -- looking at the symptoms of stroke or head trauma, injecting an anesthetic into the right or left internal carotid artery, imaging cerebral blood flow during the performance of certain language-related tasks, etc. There is nothing obvious in the behavioral or cognitive exploration of linguistic activity that connects to its cerebral lateralization (though we'll see later that there are some interestingly non-obvious ideas about this!).

The relation between mind and brain in general is an active "frontier" area of science, in which the potential for progress is very great. The neural correlates of linguistic activity, and the linguistic meaning of neural activity, are especially interesting topics. Reports of current research in this area are often presented at Penn, for example in the meetings of the IRCS/CCN Brain and Language group.

Functional localization of speech and language

Over the past couple of hundred years, most of what we know about how language is processed in the brain has come from studies of the functional consequences of localized brain injury, due to stroke, head trauma or localized degenerative disease. More recently, tools for "functional imaging" of the brain, such as fMRI, PET, MEG and ERP, provide a new sort of evidence about the localization of mental processing in undamaged brains. All of these techniques have their limitations, and so far they have mainly confirmed and refined earlier conceptions rather than revolutionizing them. However, over the next few decades these techniques promise enormous strides in understanding how the brain works in general, and in particular how it creates and understands language.

An excellent and detailed survey for a lay audience of what sorts of processing go on where in the brain, with some speculation about how and why, can be found in William H. Calvin and George A. Ojemann's CONVERSATIONS WITH NEIL'S BRAIN. If you are curious (and most people find the topic fascinating), you should spend some time reading either the on-line version or the published version of this book.

The taxonomy of language-related neurological problems, or aphasia, has been elaborated over the past decades. There are many named aphasic syndromes with clear instructions for differential diagnosis, and a plausible story about how these syndromes are linked to localization of language functions in the brain, and to injuries to various brain tissues. We'll return shortly to a more elaborated table of aphasic syndromes, with connections to diagnostic patterns and likely areas of brain damage, after looking in more detail at the two basic categories of aphasia that were identified by two 19th-century researchers, Paul Broca and Carl Wernicke.

Broca's Aphasia and Wernicke's Aphasia

As a National Institutes of Health information page says:

-

Broca's aphasia results from damage to the front portion of the language dominant side of the brain. Wernicke's aphasia results from damage to the back portion of the language dominant side of the brain.

Aphasia means "partial or total loss of the ability to articulate ideas... due to brain damage."

A note of caution: functional localization varies, sometimes considerably, across individuals. Brain injury (most commonly caused by stroke) is usually widespread enough to affect several different functional areas. Thus each patient is individual both in terms of symptoms and in terms of the correlation of symptoms to area of damage. Nevertheless, there are broad syndromes of deficit-associated-with-local-damage, as described succinctly in the NIH passage above, that are characterized as Broca's and Wernicke's aphasia.

Here is a somewhat more precise picture of the typical placement of Broca's area and Wernicke's area relative to various landmarks of cortical anatomy and physiology:

Broca's aphasia is sometimes called disfluent aphasia or agrammatic aphasia. It is named after Pierre-Paul Broca (1824-1880), a French surgeon and anthropologist who first described the syndrome and its association with injuries to a specific region of the brain.

Agrammatism typically involves laboured speech, and a lack of use of syntax in speech production and comprehension (although patients who present with agrammatic production may not necessarily have agrammatic comprehension).

An example of agrammatic speech:

-

Ah ... Monday ... ah, Dad and Paul Haney [himself] and Dad ... hospital. Two ... ah, doctors ... and ah ... thirty minutes ... and yes ... ah ... hospital. And, er, Wednesday ... nine o'clock. And er Thursday, ten o'clock ... doctors. Two doctors ... and ah ... teeth. Yeah, ... fine.

-

M.E. Cinderella...poor...um 'dopted her...scrubbed floor, um, tidy...poor, um...'dopted...Si-sisters and mother...ball. Ball, prince um, shoe...

Examiner Keep going.

M.E. Scrubbed and uh washed and un...tidy, uh, sisters and mother, prince, no, prince, yes. Cinderella hooked prince. (Laughs.) Um, um, shoes, um, twelve o'clock ball, finished.

Examiner So what happened in the end?

M.E. Married.

Examiner How does he find her?

M.E. Um, Prince, um, happen to, um...Prince, and Cinderalla meet, um met um met.

Examiner What happened at the ball? They didn't get married at the ball.

M.E. No, um, no...I don't know. Shoe, um found shoe...

In between the motor strip and Broca's area are the areas known as the supplementary motor area (SMA) and the premotor cortex, which are said to be involved in the generation of action sequences from memory that fit into a precise timing plan. All in all, it seems likely that Broca's area is connected to serialization of coordinated action of the speech organs. Why do certain syntactic abilities also seem to be localized there? Perhaps a neural architecture evolved for creating and storing complex motor plans has been pressed into service to create and store symbolic rather than purely motoric structures. As Deacon (1991) writes:

-

Human language has effectively colonized an alien brain in the course of the last two million years. Evolution makes do with what it has at hand. The structures which language recruited to its new tasks came to serve under protest, so to speak. They were previously adapted for neural calculations in different realms and just happened to exhibit enough overlap with the demands of language processing so as to make "retraining" and "reorganization" minimally costly in terms of some as yet unknown evolutionary accounting. Many of the structural peculiarities of language, its quasi-universals, and the way that it is organized within the brain no doubt reflect this preexisting scaffolding.

Wernicke's aphasia is sometimes called sensory aphasia or fluent aphasia. The speech of a Wernicke's patient is often a normally-intoned stream of grammatical markers, pronouns, prepositions, articles and auxiliaries, with difficulty in recalling correct content words, especially nouns (anomia). Words may be meaningless neologisms (paraphasia).

The patient in the passage below is trying to describe a picture of a child taking a cookie.

-

C.B. Uh, well this is the ... the /dodu/ of this. This and this and this and this. These things going in there like that. This is /sen/ things here. This one here, these two things here. And the other one here, back in this one, this one /gesh/ look at this one.

Examiner Yeah, what's happening there?

C.B. I can't tell you what that is, but I know what it is, but I don't now where it is. But I don't know what's under. I know it's you couldn't say it's ... I couldn't say what it is. I couldn't say what that is. This shu-- that should be right in here. That's very bad in there. Anyway, this one here, and that, and that's it. This is the getting in here and that's the getting around here, and that, and that's it. This is getting in here and that's the getting around here, this one and one with this one. And this one, and that's it, isn't it? I don't know what else you'd want.

Wernicke's area is at the boundary of the temporal and parietal lobes, near the parietal lobe association cortex, where cross-modality integration is said to be performed, and is adjacent to the auditory association cortex in the temporal lobe. Thus Wernicke's aphasia is sometimes called a "receptive" aphasia, in contrast to the "production" aphasia of the motor-system-related Broca's syndrome. However, as the above examples indicate, Wernicke's patients show plenty of problems in producing coherent discourse. Even if Wernicke's area originally served a receptive function, it has been taken over by the linguistic system just as Broca's area has been.

To give you some sense of what the injuries involved in these aphasic syndromes are like, here is a photo of the excised brain of a Wernicke's patient:

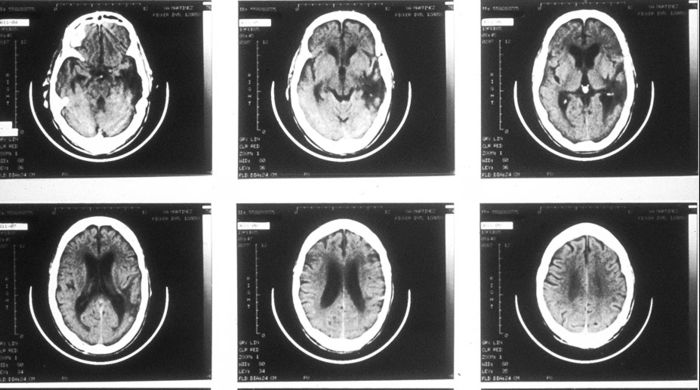

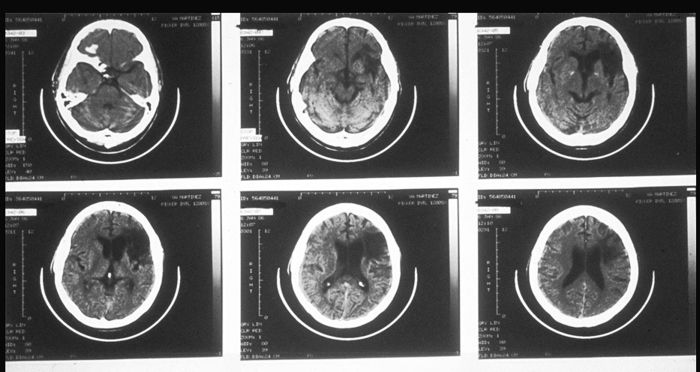

Here is a set of tomographic pictures of a different Wernicke's syndrome brain, showing a series of horizontal slices. The front of the head is towards the top, and the dominant (left) side is on the right, so it is as if we are looking at the brain from the bottom:

Here is a similar set of tomographic pictures of the brain of a Broca's patient:

The main point of these pictures: in these cases, the area of damage is rather large.

A more elaborated taxonomy

The table below shows the relationship of 8 named aphasic syndromes to six general types of symptoms:

| Syndrome | Fluent | Repetition | Comprehension | Naming | Right-side hemiplegia | Sensory deficits |
| --- | --- | --- | --- | --- | --- | --- |
| Broca | no | poor | good | poor | yes | few |
| Wernicke | yes | poor | poor | poor | no | some |
| Conduction | yes | poor | good | poor | no | some |
| Global | no | poor | poor | poor | yes | yes |
| Transcortical motor | no | good | good | poor | some | no |
| Transcortical sensory | yes | good | poor | poor | some | yes |
| Transcortical mixed | no | good | poor | poor | some | yes |
| Anomia | yes | good | good | poor | no | no |
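The table can be read as a lookup structure for differential diagnosis: given a few observed symptoms, which syndromes remain consistent? The sketch below simply encodes the rows of the table. The names `Profile`, `SYNDROMES` and `matching_syndromes` are invented for this illustration, and the exact-match rule is a simplifying assumption; it is in no sense a clinical tool.

```python
from typing import NamedTuple


class Profile(NamedTuple):
    """One row of the table; field values are copied from it verbatim."""
    fluent: str
    repetition: str
    comprehension: str
    naming: str
    hemiplegia: str
    sensory: str


SYNDROMES = {
    "Broca": Profile("no", "poor", "good", "poor", "yes", "few"),
    "Wernicke": Profile("yes", "poor", "poor", "poor", "no", "some"),
    "Conduction": Profile("yes", "poor", "good", "poor", "no", "some"),
    "Global": Profile("no", "poor", "poor", "poor", "yes", "yes"),
    "Transcortical motor": Profile("no", "good", "good", "poor", "some", "no"),
    "Transcortical sensory": Profile("yes", "good", "poor", "poor", "some", "yes"),
    "Transcortical mixed": Profile("no", "good", "poor", "poor", "some", "yes"),
    "Anomia": Profile("yes", "good", "good", "poor", "no", "no"),
}


def matching_syndromes(**observed):
    """Return the syndromes whose row agrees with every observed symptom.

    Keyword names must be Profile fields, e.g. fluent="yes".
    """
    return [
        name
        for name, profile in SYNDROMES.items()
        if all(getattr(profile, field) == value
               for field, value in observed.items())
    ]


# Fluent speech, poor repetition, good comprehension:
print(matching_syndromes(fluent="yes", repetition="poor", comprehension="good"))
```

Note that naming is poor in every row, so on its own it does not discriminate among the syndromes; fluency, repetition and comprehension do most of the work.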

Conduction aphasia generally results from lesion of the white-matter pathways that connect Wernicke's and Broca's areas, especially the arcuate fasciculus.

Global aphasia results from lesions to both Wernicke's and Broca's areas at once.

The motor and sensory variants of transcortical aphasia are produced by lesions in areas around Broca's and Wernicke's areas, respectively.

There are other syndromes as well, such as "pure word deafness", in which the patient can speak and write more or less normally, but is not able to perceive speech, even though other auditory perception is intact.

In actual clinical diagnosis, more elaborate batteries of tests are commonly given in order to assess language function in more detail, and the detailed locations of lesions can be found by MRI imaging.

Connecting mind and brain: the declarative/procedural model

We began this lecture by stressing the apparent dissociation between the phenomena of "language in the mind" and the phenomena of "language in the brain." We'll end it with a brief presentation of an idea that ties the observations about brain localization of language to many other aspects of brain function, and at the same time makes contact with some of the most basic distinctions in the cognitive architecture of language. This idea has been proposed by Michael Ullman and his collaborators, under the name of the "declarative/procedural model."

Others have proposed a distinction between declarative memories and procedural memories. Declarative memory is memory for facts, like the color of a peach; procedural memory is memory for skills, like riding a bicycle. The declarative memory system is specialized for learning and processing arbitrarily-related information, and is based in temporal (and temporal/parietal) lobe structures. The procedural memory system is specialized for non-conscious learning and control of motor and cognitive skills, which involve chaining of events in time sequence, and is based in frontal/basal-ganglia circuits. Building on this earlier distinction, Ullman proposes that what we think of as lexical knowledge (the association of meaning and sound for morphemes, irregular wordforms and fixed or idiomatic phrases) is crucially linked with the declarative, temporal-lobe system, while what we think of as grammatical knowledge (productive methods for real-time sequencing of lexical elements) is crucially linked to the procedural, frontal/basal-ganglia system.

Ullman argues that declarative and lexical memory both involve learning arbitrary conceptual/semantic relations; that the knowledge involved is explicit, i.e. relatively accessible to consciousness; and that they involve lateral/inferior temporal-lobe structures for already-consolidated knowledge, and medial temporal-lobe structures for new knowledge. By contrast, procedural and grammatical memory both involve coordination of procedures in real time and computation of sequential structures; the knowledge involved in both tends to be implicit and encapsulated, so that it is relatively inaccessible to conscious examination and control; and both involve frontal and basal ganglia structures in the dominant hemisphere.
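The division of labor proposed here, stored lexical items on one side and productive rules on the other, can be sketched as a lookup with a rule-based fallback. The English past tense is used only as a convenient illustration chosen for this sketch; the word list, the function name and the simplified spelling rule are all assumptions, not part of the model's formal statement.

```python
# "Declarative" side: arbitrary, memorized sound-meaning pairings.
# A tiny hypothetical lexicon of irregular past-tense forms.
IRREGULAR_PAST = {
    "go": "went",
    "sing": "sang",
    "bring": "brought",
}


def past_tense(verb):
    """Return a past-tense form: stored item if one exists, else the rule.

    The rule is deliberately simplified (no consonant doubling, no
    y -> ied); it stands in for "productive sequencing", nothing more.
    """
    stored = IRREGULAR_PAST.get(verb)
    if stored is not None:
        return stored          # retrieved from memory
    if verb.endswith("e"):
        return verb + "d"      # composed by rule
    return verb + "ed"         # composed by rule


for verb in ["go", "walk", "bake", "blick"]:
    print(verb, "->", past_tense(verb))
```

The rule applies to any verb not found in the stored list, including a made-up one like "blick", while removing an entry from the stored list makes the rule over-apply (producing "goed"). That is the kind of dissociation between memorized and computed forms that the model leads one to look for.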

We can see this as a detailed elaboration of the old observation that Wernicke's area is adjacent to primary auditory cortex, in the direction of visual cortex and cross-modal association areas, while Broca's area is adjacent to the portion of the motor strip that controls the vocal organs. The declarative/procedural model is supported by a wide variety of interesting, specific and sometimes unexpected evidence, coming from psycholinguistic studies, developmental studies, neurological cases, functional imaging studies and neurophysiological observations.